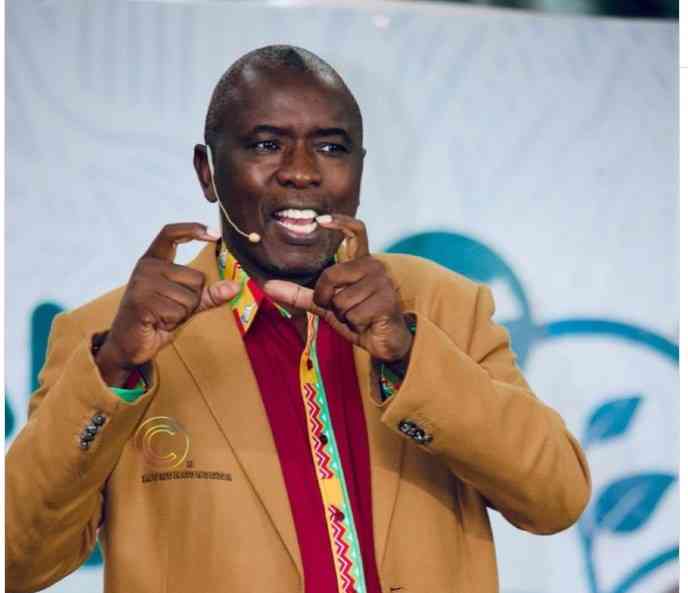

ZIMBABWE’S ICT, Postal and Courier Services minister Tatenda Mavetera recently signalled that government is drafting a policy to ban under-18s from social media platforms.

As in many countries considering similar measures, the intention is understandable: protecting children from cyberbullying, explicit content and online predators. But Zimbabwe should heed the caution raised by South Africa’s Communications minister Solly Malatsi when his country debated a similar move.

Malatsi warned against “cosmetic interventions that seem like we are doing something” when the State lacks the capacity to enforce them.

His words should give Zimbabwe pause.

What can we learn from Australia?

When I posted about the proposal on Facebook, some users pointed out that Australia had already done it and suggested Zimbabwe could follow suit.

They are correct. In December 2025, Australia implemented the world’s first nationwide ban preventing under-16s from accessing social media platforms.

However, early reports show the policy has been far from airtight.

According to media reports, a 13-year-old bypassed Snapchat’s age verification in minutes using her mother’s photo. Another user reportedly uploaded a picture of a dog and was approved by the system. Some teenagers allegedly used photos of Beyoncé to pass age checks.

At the same time, Google searches for virtual private networks (VPNs) surged to a 10-year peak, with one provider reporting Australian installations jumping 400% within 24 hours.

Australia’s eSafety Commissioner acknowledged these limitations, noting that safety laws are not expected to eliminate every breach — comparing them to speed limits or underage drinking laws that are frequently violated but still serve a purpose.

This reveals a fundamental principal–agent problem: governments (principals) cannot perfectly control how platforms (agents) implement rules or how citizens respond.

If Australia — with far greater resources and regulatory capacity — struggles to enforce the policy flawlessly, Zimbabwe must seriously assess its own ability to do so.

Our reality in Zimbabwe

Three structural issues make such a ban even harder to enforce in Zimbabwe.

First is the collective action problem created by shared devices.

In many Zimbabwean households, a single smartphone is used by several family members. A 15-year-old using her mother’s phone will appear online as a 38-year-old adult. Without reliable identity verification linked to devices or SIM cards, age restrictions become nearly impossible to enforce.

A Kenyan data privacy expert recently warned that national ID data contains sensitive information that “should not be in the hands of social media giants looking to train AI.” This highlights a deeper challenge: Zimbabwe lacks the administrative infrastructure to link every online user to a verified identity.

Second is the jurisdictional trap created by weaponised interdependence. Meta has no physical office in Zimbabwe, and TikTok's regional presence in Africa is minimal. I understand TikTok offers Family Pairing as a parental control feature, something I learnt about at the Digital Rights and Inclusion Forum (DRIF 2025) in Zambia.

If platforms decide compliance is too costly, fines may carry little weight. The same Kenyan expert noted that global platforms often maintain “very weak privacy protections for African jurisdictions” and sometimes budget for penalties in regions where enforcement is weak.

This is a classic case of regulatory capture in reverse: instead of capturing regulators, businesses simply operate beyond their reach.

Third is the opportunity cost, which touches on clientelism and programmatic linkages. In Zimbabwe, WhatsApp is used for sharing homework.

YouTube is where coding is learned. Instagram is where small digital hustles start.

This comes as Unicef provides hundreds of laptops and tablets to disadvantaged schools, building the capacity for digital learning.

As Fiachra McAsey, Unicef Zimbabwe representative, stated, "This handover marks an important milestone in our collective effort to ensure that every child, regardless of where they live, has access to quality digital learning." We cannot both invest in digital inclusion and legislate digital exclusion; that would be a failure of policy coherence.

What other countries are doing better?

Kenya's 2025 regulations require parents to register SIM cards for minors, establishing identity verification before any restrictions take effect.

Children must re-register within 90 days of turning 18 or face suspension. This addresses the credible commitment problem; the State shows it can enforce rules because it has established the administrative framework first.

Lagos state is focusing on content creators, not children. In February 2026, officials warned that involving minors in harmful content has criminal consequences. Titilola Adeniyi-Vivour, permanent secretary of the Domestic and Sexual Violence Agency, clearly stated that "involving minors in content depicting abuse, sexual themes, harmful stereotypes or unsafe scenarios is unethical and violates laws." They are tackling the supply side of harm, recognising that preference falsification won't protect children if harmful content remains accessible.

A smarter path forward

If we want genuine protection, not just superficial measures that amount to preference falsification at the policy level — pretending we're taking action while accomplishing nothing — I propose five specific steps.

First, incorporate digital literacy into every school curriculum. Teaching children to recognise red flags enhances individual skills and tackles the collective challenge of online safety.

Second, follow Kenya's method of setting up registration systems. Connect SIM cards to verified ages before considering bans, allowing for the bureaucratic discretion needed for targeted enforcement.

Third, hold platforms responsible for content moderation in all 16 official languages recognised by our constitution, starting with Shona and Ndebele. Demand they take our context seriously, tackling the informational gaps that leave African users vulnerable.

Fourth, follow Lagos by targeting adult content creators who exploit minors. Use existing child protection laws to challenge the structural power of those producing harmful content.

Fifth, partner with mobile networks to zero-rate reporting portals so children can report abuse without data charges, tackling the public goods issue of accessible reporting mechanisms.

The moral concern behind this policy is genuine. However, superficial bans that look good in Press releases but accomplish nothing are worse than taking no action at all.

They create a false sense of security while leaving children vulnerable, a clear example of preference falsification at the organisational level. Let's learn from Malatsi, Australia's struggles and our neighbours. Let us create something that truly works.